In SEP licensing, a declaration costs almost nothing, but challenging it costs a fortune. Across 5G, 4G LTE, HEVC, and Wi-Fi, rigorous independent reviews consistently show that 30% to 60% of the patents in declared portfolios are non-essential. This isn’t just a margin of error; it is a predictable outcome of declaration systems designed for speed, not verification.

A statistic is not a defense in a high-stakes negotiation. What changes leverage is documented proof: an accurate mapping of patent claim elements to the standard's specifications, supported by clause citations that can be checked and challenged.

Conducting a documented, clause-level review of a 100-patent portfolio takes roughly 600 to 700 expert-hours, and that timeline rarely aligns with the pace of licensing talks.

This creates a brutal asymmetry: a licensor can assert a portfolio in an afternoon, but the licensee is left with a deadline that rarely accommodates four months of manual preparation. Most companies end up “negotiating blind,” paying for patents that wouldn’t hold up in court simply because they ran out of time to audit them.

This article explores how AI-driven SEP analysis bridges this gap, not by providing a “summary” or a “desk review,” but by delivering documented, claim-level proof at the speed of the negotiating window.

We will examine a specific case where a 100-patent assertion was audited in two weeks, shifting the leverage from the party with the most declarations to the party with the best data.

100 Declared SEPs, One Negotiating Window

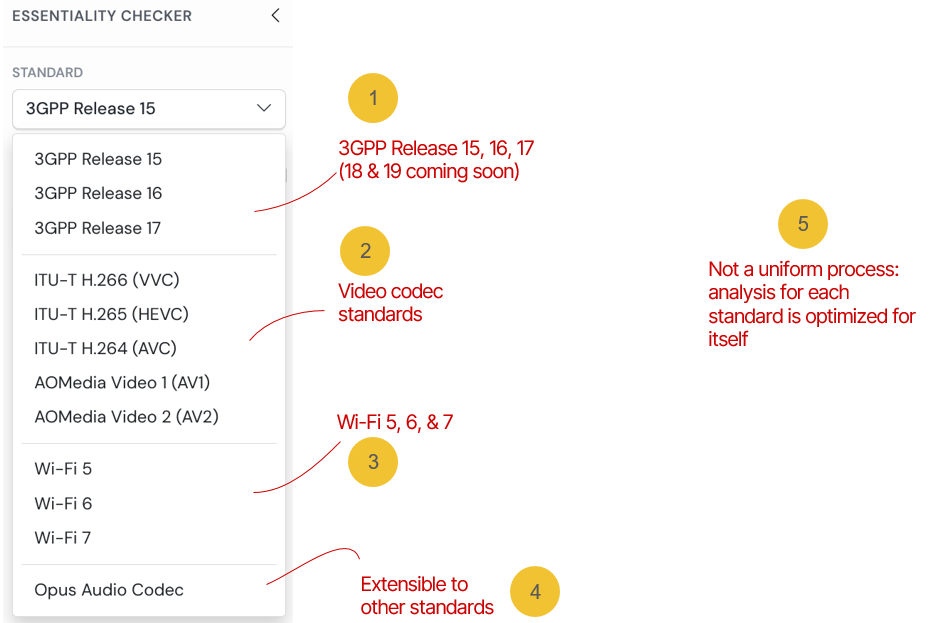

Ray, an in-house patent counsel for a telecom company, arrived with a portfolio assertion that had already entered active negotiations. The licensor had declared 100 Standard Essential Patents across four standards: 5G NR (3GPP Release 15 and Release 16), 4G LTE, Wi-Fi 6/6E (IEEE 802.11ax), and HEVC. By the time the project arrived, royalty figures were already being discussed.

Recognizing that the portfolio likely contained non-essential patents was not the problem. At the scale of 100 declared SEPs, that is a near-certainty. The problem was generating documented proof of it, systematically across all 100 patents, within a timeline that would actually change the outcome of the negotiation.

A Workflow Built for This Exact Problem

Had the team taken the traditional route, the work would have begun with a single patent and a technical specification running to thousands of pages.

Claim-chart-level essentiality analysis, when conducted to the standard required for licensing discussions or formal challenge proceedings, follows a sequence that cannot be meaningfully compressed without sacrificing defensibility.

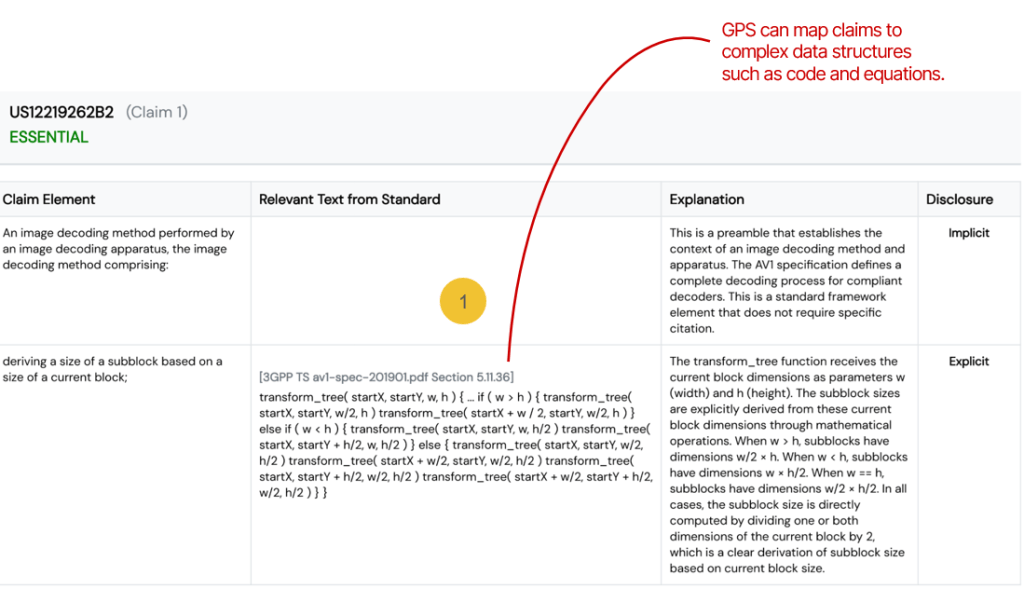

An analyst begins with the independent claims of a single patent. Each claim element is isolated. The analyst then moves into the relevant technical specification, which for a standard like 3GPP Release 15 runs across dozens of individual documents, and searches for the specific location where that element appears, not as a possibility the standard permits, but as a requirement the standard imposes. Finding that location is not a keyword search. Mandatory requirements in technical standards are distributed across clause structures, cross-references, and defined terminology, and interpreting them accurately requires reading them in context.

Once a correspondence is identified, it must be documented with the claim element, the specific clause reference, and the analytical reasoning that establishes why the correspondence reflects a mandatory rather than discretionary requirement.

If the correspondence does not exist, if the standard permits the feature but does not require it, or if the standard simply does not address the claimed feature in its mandatory text, that determination must be documented with equal precision, because it is the non-essential determination that drives negotiating value.

Then the process repeats for the next element. And the next claim. And the next patent.
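The element-by-element record described above can be sketched as a small data structure. This is a minimal illustration, not the format any particular analyst or tool uses: the names `Support`, `ElementMapping`, `claim_is_essential`, and `blocking_elements` are hypothetical, the clause labels are placeholders rather than real citations, and the rule that a claim is treated as essential only when every element maps to a mandatory requirement is a simplifying assumption.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Support(Enum):
    MANDATORY = "mandatory"  # the standard requires the feature
    OPTIONAL = "optional"    # the standard permits the feature but does not require it
    ABSENT = "absent"        # the mandatory text does not address the feature


@dataclass
class ElementMapping:
    element: str             # text of the isolated claim element
    support: Support         # what the standard says about it
    clause: Optional[str]    # clause citation; None when nothing was found
    reasoning: str           # recorded for essential and non-essential findings alike


def claim_is_essential(mappings: List[ElementMapping]) -> bool:
    """Assumed rule: every element must map to a mandatory requirement."""
    return bool(mappings) and all(m.support is Support.MANDATORY for m in mappings)


def blocking_elements(mappings: List[ElementMapping]) -> List[ElementMapping]:
    """Elements that defeat essentiality under the assumed rule."""
    return [m for m in mappings if m.support is not Support.MANDATORY]


# Hypothetical chart; the clause labels are placeholders, not real citations.
chart = [
    ElementMapping("transmitting a configuration message", Support.MANDATORY,
                   "Spec A, clause X.1", "Normative 'shall'; no alternative procedure."),
    ElementMapping("selecting a beam using a stored ranking", Support.OPTIONAL,
                   "Spec A, clause X.2", "Selection method is left to the implementation."),
]

print(claim_is_essential(chart))
print([m.element for m in blocking_elements(chart)])
```

In this sketch a single optional or absent element is enough to flag the claim, and the stored reasoning for that element is the part a licensee would rely on in discussion.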

At 6 to 7 hours per patent for a 100-patent portfolio, the arithmetic is straightforward and brutal: 600 to 700 analyst-hours, assuming no rework and no difficult edge cases. Four months of work.
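As a rough check on that figure, the conversion from analyst-hours to calendar time can be sketched as follows. The staffing numbers are assumptions that do not come from the project itself: one full-time analyst, a 40-hour week, and an average month of 52/12 weeks.

```python
HOURS_PER_WEEK = 40          # assumption: one full-time analyst
WEEKS_PER_MONTH = 52 / 12    # average calendar month


def calendar_months(total_hours: float, analysts: int = 1) -> float:
    """Convert analyst-hours to elapsed months for a given team size."""
    return total_hours / (HOURS_PER_WEEK * analysts) / WEEKS_PER_MONTH


low_hours, high_hours = 100 * 6, 100 * 7   # 600 to 700 analyst-hours
print(f"{calendar_months(low_hours):.1f} to {calendar_months(high_hours):.1f} months")
```

Under these assumptions the range comes out at about 3.5 to 4 months for a single analyst, before any rework. Adding analysts shortens the elapsed time but not the total hours.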

On a project where the licensor’s negotiating timeline was weeks, not months, that process would not have produced a usable result. It would have produced an excellent analytical record that arrived after the outcome had already been determined.

How the AI SEP Mapping Tool Entered the Picture

To make the review tractable without losing defensibility, the team deployed GreyB’s AI SEP Mapping Tool (SEP Analyzer) against the relevant specification corpus, including 5G NR (3GPP Release 15/16), 4G LTE, Wi-Fi 6/6E (IEEE 802.11ax), and HEVC.

The decision to start the SEP analysis with the tool rather than treat it as a supplementary resource was deliberate. The project did not allow for a workflow in which AI-assisted analysis ran in parallel with traditional analysis as a validation check.

The AI analysis had to be the structural foundation of the workflow, with expert human review positioned as the validation and finalization layer on top of it. That inversion, AI first, human expert second, was the mechanism by which the timeline became workable.

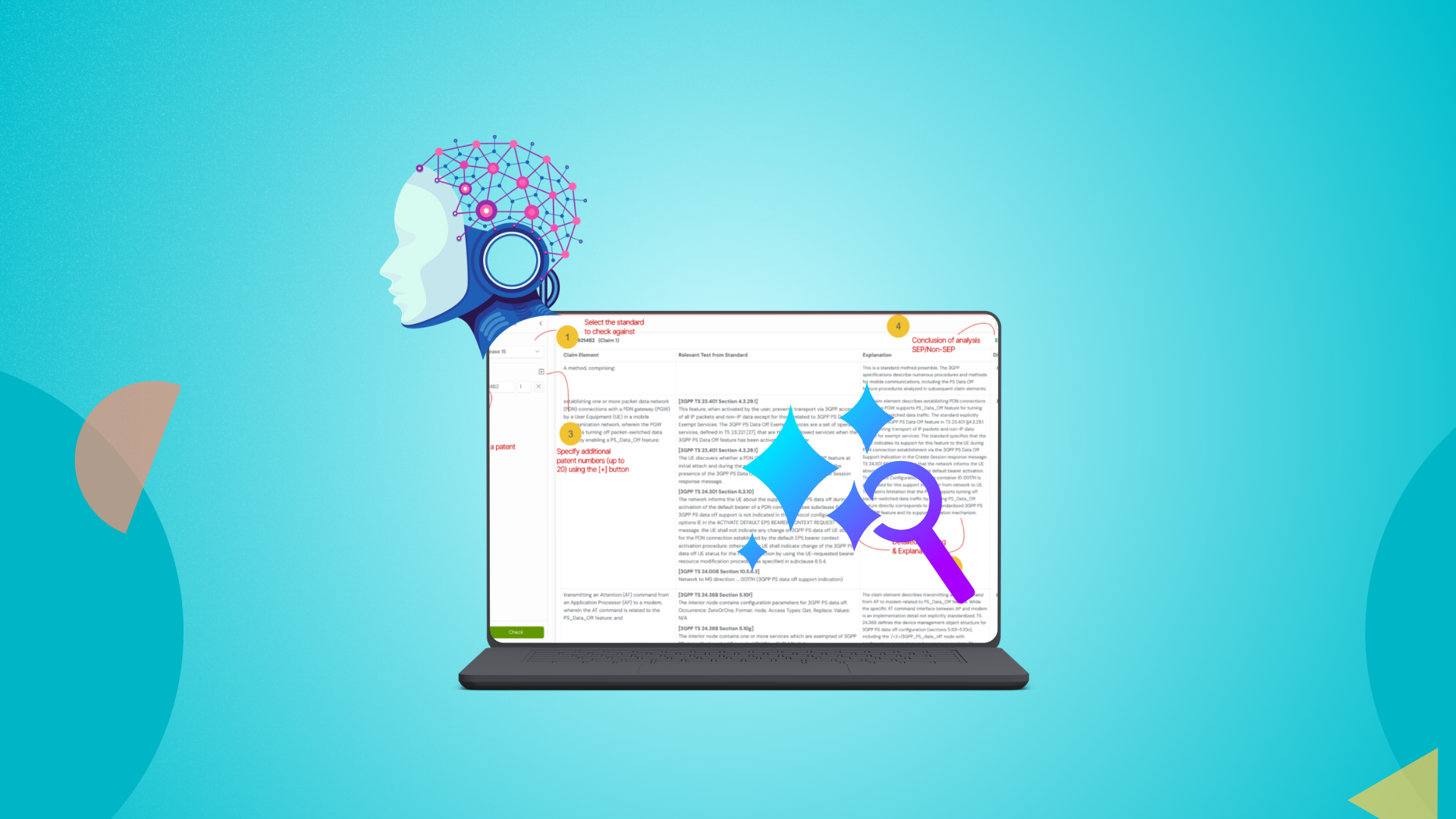

For each declared SEP, the system is fed the patent and the target standard, and it performs a structured analysis across independent claims. Within approximately 3 minutes per patent, the tool produces:

- A detailed claim chart mapping each claim element to the relevant clauses of the technical standard.

- An assessment of whether each mapped element corresponds to a mandatory requirement of the standard or a merely optional/implementation-specific feature.

- An overall essentiality determination for the patent, with the analytical reasoning documented in a structured, reviewable format.

- Citations to specific sections of the standard, enabling efficient human verification of the AI’s analysis.

The output is not a black-box verdict. It is a structured analytical document, comparable in format and content to what a human analyst would produce, but generated at a speed that makes large-portfolio review genuinely tractable.

The Combined Human-AI Workflow

Speed came from automation; defensibility came from review. Each patent followed a consistent sequence:

- AI analysis (about 3 to 4 minutes) produced mappings, essentiality indicators, and cited clauses.

- Expert human review (about 1 to 2 hours) validated mappings, handled edge cases, and finalized the determination.

That shifted the total effort per patent to about 1 to 2 hours instead of 6 to 7, cutting time by roughly 70 to 80 percent while preserving a documented trail suitable for licensing discussions.
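The comparison can be reproduced with a few lines of arithmetic. The sketch below uses only the per-patent figures quoted above; treating the AI step and the expert review as strictly additive is an assumption, and the function name `portfolio_hours` is illustrative.

```python
PATENTS = 100


def portfolio_hours(low_per_patent: float, high_per_patent: float,
                    patents: int = PATENTS) -> tuple:
    """Scale a per-patent effort range (in hours) to the whole portfolio."""
    return patents * low_per_patent, patents * high_per_patent


# Traditional route: 6 to 7 analyst-hours per patent.
manual = portfolio_hours(6, 7)

# Hybrid route: 3 to 4 minutes of AI analysis plus 1 to 2 hours of expert review.
hybrid = portfolio_hours(1 + 3 / 60, 2 + 4 / 60)

# Bracket the saving: slowest hybrid vs fastest manual, and the reverse.
smallest_saving = 1 - hybrid[1] / manual[0]
largest_saving = 1 - hybrid[0] / manual[1]
midpoint_saving = 1 - sum(hybrid) / sum(manual)

print(f"manual: {manual[0]:.0f} to {manual[1]:.0f} hours")
print(f"hybrid: {hybrid[0]:.0f} to {hybrid[1]:.0f} hours")
print(f"saving: {smallest_saving:.0%} to {largest_saving:.0%}, midpoint {midpoint_saving:.0%}")
```

With these inputs the saving is bracketed at roughly 66 to 85 percent, with a midpoint near 76 percent, which is consistent with the 70 to 80 percent figure.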

As standards and the high-tech patent landscape continue to evolve, a robust and repeatable workflow becomes paramount. Shortcuts may seem tempting, but they can introduce risks that compromise strategic negotiations. A systematic approach that combines AI and human expertise is essential for covering the full portfolio and iterating within the negotiating window.

If you’re interested in exploring how the AI SEP Mapping Tool can streamline your portfolio management and enhance your approach to SEP essentiality, book a demo today.

Experience firsthand how this automated solution integrates seamlessly into your workflow and elevates your patent strategies.